CUDA dim3 example

1/8/2024

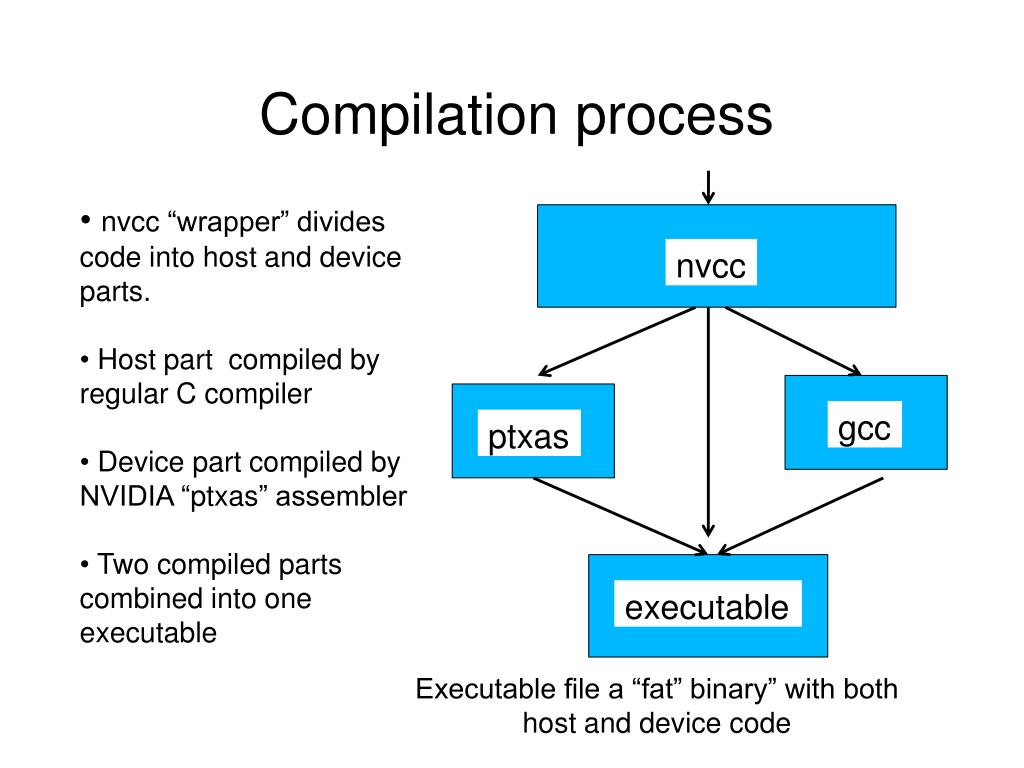

In this post I'll introduce a C++ variadic function template to calculate a global linear index, using a left fold over a list of extent/index pairs corresponding to each dimension of a rectilinear structure such as a multi-dimensional array. The fold is first derived in Haskell, before the C++ version, applicable to arbitrary dimension counts, is applied to the familiar gridDim, blockDim, blockIdx and threadIdx built-ins of a CUDA grid.

CUDA kernels are executed by numerous threads. The full set of these is referred to as a CUDA grid, and its size is set at runtime using the triple-chevron syntax. [...] launched organised as a collection of 210 CUDA thread blocks, [...] Each thread in a CUDA grid is uniquely identified by the unsigned int, zero-based x, y and z data members of the uint3 built-in constants blockIdx and threadIdx. Each of these data members will be less than its corresponding kernel launch parameter. The launch parameters passed via the triple-chevron syntax are also available within the kernel through two further built-ins, named gridDim and blockDim.

CUDA's blockIdx and threadIdx variables are analogous to the index variables of nested loops. The innermost loop body, in a cache-friendly manner, increments each element of `a6`, a 6D array. Here the array extents are equal to each loop's iteration count. Nested loop indexing is often as simple when the array and loop extents are proportional.

This repository contains notes and examples to get started with parallel computing with CUDA.

Introduction

CUDA® is a parallel computing platform and programming model developed by NVIDIA for general computing on graphics processing units (GPUs). With CUDA, developers are able to dramatically speed up computing applications by harnessing the power of GPUs. In GPU-accelerated applications, the sequential part of the workload runs on the CPU, which is optimized for single-threaded performance, while the compute-intensive portion of the application runs on thousands of GPU cores in parallel. When using CUDA, developers program in popular languages such as C, C++, Fortran, Python and MATLAB and express parallelism through extensions in the form of a few basic keywords. CUDA accelerates applications across a wide range of domains, from image processing to deep learning, numerical analytics and computational science.

The notes cover:

- `__global__`, `<<<...>>>`, blockIdx, threadIdx, blockDim
- Managing communication and synchronization
- cudaMemcpy() vs cudaMemcpyAsync(), cudaDeviceSynchronize()

Resources listed in the notes:

- BOOK: Designing and Building Parallel Programs
- BOOK: Introduction to Parallel Computing
- CUDA Zone – tools, training and webinars
- Udacity - Intro to Parallel Programming
- An Easy Introduction to CUDA C and C++
- Avoiding Pitfalls when Using NVIDIA GPUs
- GPU Activity Monitor – NVIDIA Jetson TX Dev Kit

Terminology: the host is the CPU and its memory (host memory); the device is the GPU and its memory (device memory). The compute capability of a device describes its architecture.

GPU computing is about massive parallelism. We will use 'blocks' and 'threads' to implement parallelism. A block can be split into parallel threads, so one thread block consists of a set of threads. Unlike parallel blocks, threads have mechanisms to communicate and synchronize efficiently.

Within a thread block, the built-in variables threadIdx and blockDim are essential. Together with blockIdx they give each thread its global index: `int index = threadIdx.x + blockIdx.x * blockDim.x;`

Within a block, threads share data via shared memory. Use `__shared__` to declare a variable or array in shared memory; it is allocated per block. Data is shared between threads in a block and is not visible to threads in other blocks. Shared memory is distinct from device memory, which is referred to as global memory. For a given `radius`, each block reads (blockDim.x + 2 * radius) input elements from global memory to shared memory, and writes blockDim.x output elements to global memory.

In CUDA there are multiple ways to achieve GPU synchronization.
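To make the left fold over extent/index pairs more concrete, here is a minimal host-side C++ sketch. It is not the original author's template: the names `linear_index`, `linear_index_from` and `global_index_1d` are hypothetical, and plain integers stand in for the CUDA built-ins so the code compiles without nvcc. Each fold step computes `acc * extent + index`, taking dimensions outermost first.

```cpp
#include <cassert>
#include <cstddef>

// Base case of the left fold: no extent/index pairs remain.
constexpr std::size_t linear_index_from(std::size_t acc) { return acc; }

// Fold step: consume one (extent, index) pair, outermost dimension first.
template <typename... Rest>
constexpr std::size_t linear_index_from(std::size_t acc, std::size_t extent,
                                        std::size_t index, Rest... rest) {
    return linear_index_from(acc * extent + index, rest...);
}

// Hypothetical entry point; arguments are extent0, index0, extent1, index1, ...
template <typename... Pairs>
constexpr std::size_t linear_index(Pairs... pairs) {
    return linear_index_from(0, pairs...);
}

// Host-side stand-in for threadIdx.x + blockIdx.x * blockDim.x
// (assumption: plain integers replace the CUDA built-ins).
constexpr std::size_t global_index_1d(std::size_t block_idx,
                                      std::size_t block_dim,
                                      std::size_t thread_idx) {
    return thread_idx + block_idx * block_dim;
}

// (gridDim.x = 4, blockIdx.x = 2), (blockDim.x = 256, threadIdx.x = 5)
static_assert(linear_index(4, 2, 256, 5) == global_index_1d(2, 256, 5),
              "the fold matches the 1D CUDA index formula");

// Last element of a 2 x 3 x 4 array: indices (1, 2, 3) map to offset 23.
static_assert(linear_index(2, 1, 3, 2, 4, 3) == 23u,
              "row-major offset of the last element");
```

With a (gridDim.x, blockIdx.x) pair followed by a (blockDim.x, threadIdx.x) pair, the fold reduces to `blockIdx.x * blockDim.x + threadIdx.x`, because the outermost extent only ever multiplies the initial zero. To call such a function inside a kernel it would likely need to be marked `__host__ __device__`.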
